Published by Dimitri Vedeneev, Executive Director Secure AI Lead, Henry Ma, Technical Director, Strategy & Consulting, and Katherine Walsh, Senior Consultant, Strategy & Consulting on 24 March 2026

Organisations are moving rapidly in their adoption of AI, and the breadth and nature of associated risks are becoming clearer.

As AI adoption accelerates, organisations need practical and flexible governance frameworks to maintain consistent oversight, amidst the growing need to uplift controls and diverging regulatory expectations.

Our previous Secure AI blogs have explored how AI adoption opens up opportunities but also introduces operational risks, outlined eight practical strategies to mitigate these risks, and covered the key regulatory frameworks that have emerged.

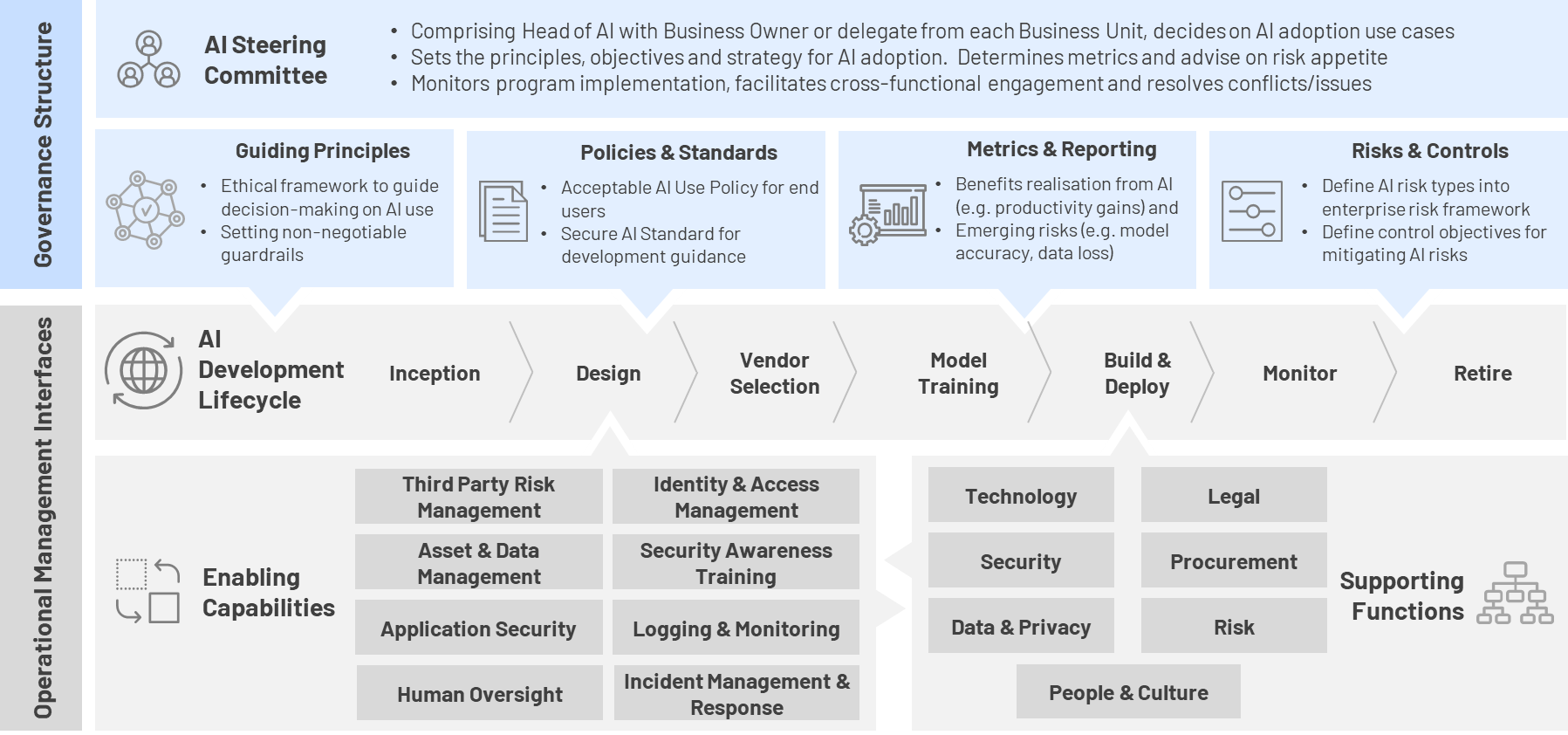

In this next part of the series, we propose a modular, technology-agnostic AI governance framework (see Figure 1) that organisations can use, along with five critical success factors for implementing it effectively, so that organisations can continue to adopt AI safely, securely and responsibly as it evolves.

Figure 1: AI Governance Framework

1. Establish a diverse AI steering committee

Given how broadly AI can be adopted and how far it can transform an organisation, a steering committee with senior executive representation from all areas of the business can provide a more considered and well-rounded view of its impacts across business process, people, cyber security, technology, data, regulation, ethics and society.

One of the main roles of the AI steering committee is to evaluate and approve AI use cases. This includes prioritising use cases, weighing their benefits and risks fairly and objectively, and quickly resolving any emerging conflicts between business functions or projects.

When selecting members of the steering committee, organisations should also consider whether they need to appoint an ‘AI lead’, or whether the responsibility should be made part of a senior executive’s existing portfolio.

While the current trend seems to be expanding the Chief Data Officer role to Chief Data & AI Officer, this may be a more transitional strategy as organisations continue to evaluate AI and their options.

2. Ground AI adoption on strong ethical principles

Given the pace at which AI technology is evolving, an AI governance framework needs to be flexible enough to govern new use cases and risks we cannot foresee today, while remaining anchored in several ethical principles that are unlikely to change.

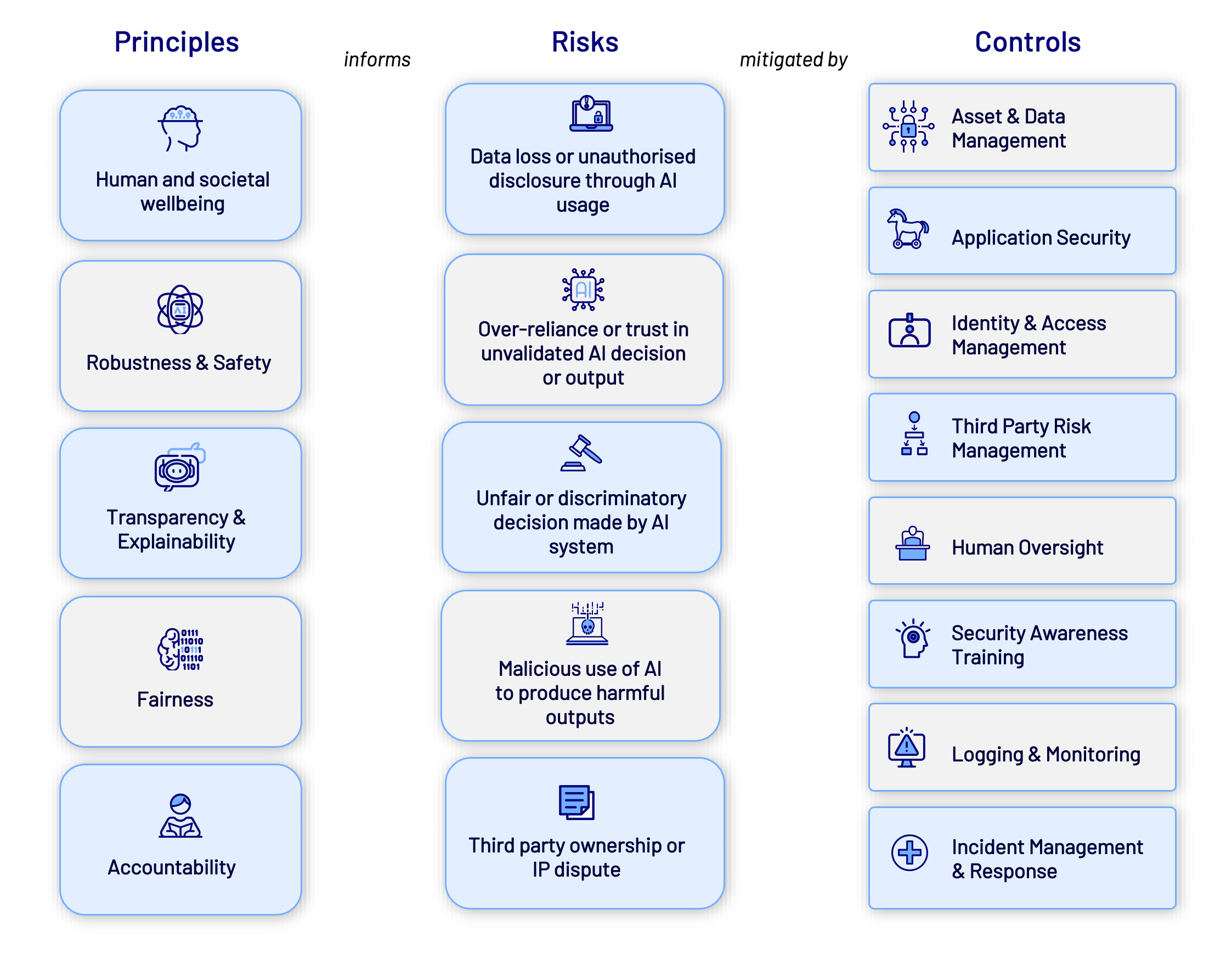

While many bodies, such as the Organisation for Economic Co-operation and Development (OECD) and Australian Federal and State governments, have published different ethical principles, we find they can be largely distilled down to five:

These principles serve as invaluable north stars to help us identify risks in AI adoption and define what is correct, or incorrect.

For example, when aligning the risks we identified in our first blog to these principles, over-reliance on or trust in an unvalidated AI decision or output violates the accountability principle, while malicious use of AI to produce harmful outputs violates the human and societal wellbeing principle.

Extending this traceability further, when considering the eight mitigation strategies explored in our second blog, the control objectives can be traced back to restoring one of these principles when the AI system goes wrong.

This same traceability also reveals an opportunity – when these principles are upheld by well-designed controls that are implemented early, organisations can move faster when adopting AI and safely scale with greater confidence.

Figure 2: AI Principles inform identified risks, which are mitigated by relevant controls
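The traceability described above can be kept as a simple register that links each risk to the principle it violates and to the controls that mitigate it. The following is a minimal sketch only. The two risk-to-principle pairings come from the examples in this section, while the `Risk` structure, the function names and the control name are hypothetical placeholders rather than a prescribed design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    principle: str        # ethical principle the risk violates
    controls: tuple = ()  # controls intended to restore that principle


# The two risk-to-principle pairings are taken from the examples above.
# The control name is a hypothetical placeholder, not a prescribed control.
RISK_REGISTER = [
    Risk(
        name="Over-reliance on an unvalidated AI output",
        principle="accountability",
        controls=("human review of high-impact outputs",),
    ),
    Risk(
        name="Malicious use of AI to produce harmful outputs",
        principle="human and societal wellbeing",
    ),
]


def controls_by_principle(register):
    """Group the controls in the register under the principle they uphold."""
    grouped = {}
    for risk in register:
        grouped.setdefault(risk.principle, set()).update(risk.controls)
    return grouped


def risks_without_controls(register):
    """Return the names of risks that no control currently mitigates."""
    return [risk.name for risk in register if not risk.controls]
```

A register like this makes gaps visible: calling `risks_without_controls(RISK_REGISTER)` lists any risk that has been tied to a principle but not yet to a control.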

3. Keep AI policies and standards simple

To maintain simplicity, the AI steering committee should consider extending existing organisational policies and standards to bridge any gaps in AI adoption.

The two areas where this may be required are:

- An acceptable AI use policy for end users that defines the permissible and prohibited uses of AI. We often encounter diverse viewpoints on shadow AI and whether non-approved AI applications should be added to a blocklist or just monitored. The answer depends on the organisation’s risk appetite, the maturity of its AI capability (for example, organisations already entrenched with selected AI vendor platforms tend to be less open to other vendors) and the compensating controls in place.

- A standard for developing AI systems. Ideally, as a start, this should codify the eight mitigating strategies discussed in the previous blog into more specific control requirements that must be in place for AI systems. Once an organisation becomes more mature, it should establish a minimum set of baseline controls that apply to all AI use cases, and define the criteria or tiering framework that determines when a use case triggers additional controls.
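To illustrate how a baseline plus tiering approach might work in practice, the sketch below applies a set of baseline controls to every use case and adds further controls when a criterion is met. All criteria, control names and tier labels here are assumptions made for illustration. Each organisation would define its own based on its risk appetite and standard.

```python
from dataclasses import dataclass

# Hypothetical baseline controls that apply to every AI use case.
BASELINE_CONTROLS = {"risk assessment", "acceptable use acknowledgement"}

# Hypothetical criteria, each mapped to the extra controls it triggers.
EXTRA_CONTROLS = {
    "handles_sensitive_data": {"data leakage testing"},
    "customer_facing": {"output monitoring"},
    "makes_automated_decisions": {"human oversight"},
}


@dataclass(frozen=True)
class UseCase:
    name: str
    handles_sensitive_data: bool = False
    customer_facing: bool = False
    makes_automated_decisions: bool = False


def required_controls(use_case):
    """Return the baseline controls plus any triggered by the criteria."""
    controls = set(BASELINE_CONTROLS)
    for criterion, extra in EXTRA_CONTROLS.items():
        if getattr(use_case, criterion):
            controls |= extra
    return controls


def tier(use_case):
    """Assign an illustrative tier from the number of criteria met."""
    triggered = sum(getattr(use_case, criterion) for criterion in EXTRA_CONTROLS)
    if triggered == 0:
        return "baseline"
    return "elevated" if triggered == 1 else "high"
```

For example, `required_controls(UseCase("meeting notes summariser"))` returns only the baseline set, while a use case that meets all three criteria is placed in the "high" tier and picks up every additional control.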

4. Establish a consistent AI development lifecycle

With the principles, policies and standards now defined, operationalising an AI development lifecycle will help to ensure consistent treatment of each AI use case from inception to retirement.

Introduced in our earlier blog, the lifecycle is intended to be a flexible model that can be applied to all kinds of AI development or deployment – from something as small as an embedded AI feature within a SaaS application to a full-scale internally developed AI model and system.

Not all stages of the lifecycle will apply to every use case. Rather, the model serves as an anchor point for the steering committee to maintain oversight, establish stage gates, coordinate between business functions and deploy controls consistently.

Maintaining, implementing and uplifting these controls as organisational capabilities requires concerted effort and collaboration across various functions in the organisation. This means having clearly defined and documented RACIs, processes and interfaces so that teams understand their roles and responsibilities and can work together to maintain a secure posture.

5. Choose meaningful metrics

As the AI development lifecycle is established and use cases move through the pipeline to production, choosing a set of meaningful metrics to measure risks and benefits can help keep the organisation’s AI adoption aligned to its business strategy and risk exposure.

Some good metrics we have seen organisations start to measure and align to principles, risks and controls include:

- AI policy compliance rate: Percentage of AI applications that have completed a risk assessment or meet the AI security standard.

- Incident occurrence rate: Number of AI-related ‘near misses’ or failures (e.g. hallucination leading to wrong advice, prompt injection or data leakage). There is also value in measuring each type separately to compare the prominence of each risk.

- Accuracy vs human oversight: Percentage of AI decisions that are reviewed or overridden by human operators.

- Explanation coverage: Percentage of AI-driven decisions where a human-readable explanation can be generated.

- Stakeholder trust score: A qualitative score from all users (e.g. internal employees and external customers) measuring their trust and confidence in the AI’s output.
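The count-based metrics above can be calculated from fairly simple records. The sketch below shows one possible way to do so for policy compliance, incidents by type, human intervention and explanation coverage. The record structures, field names and function names are assumptions for illustration and do not reflect any particular tool. The stakeholder trust score is left out because it depends on how an organisation designs its survey.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class AIApplication:
    name: str
    risk_assessed: bool  # completed a risk assessment or meets the standard


@dataclass
class Decision:
    reviewed_by_human: bool
    overridden: bool
    explanation_available: bool


def percentage(part, whole):
    """Return part/whole as a percentage, or 0.0 when there is no data."""
    return 0.0 if whole == 0 else round(100.0 * part / whole, 1)


def policy_compliance_rate(apps):
    return percentage(sum(a.risk_assessed for a in apps), len(apps))


def human_intervention_rate(decisions):
    """Share of AI decisions that a human reviewed or overrode."""
    touched = sum(d.reviewed_by_human or d.overridden for d in decisions)
    return percentage(touched, len(decisions))


def explanation_coverage(decisions):
    return percentage(sum(d.explanation_available for d in decisions), len(decisions))


def incidents_by_type(incident_types):
    """Count incidents per type so each risk can be compared separately."""
    return Counter(incident_types)
```

Counting incidents per type, rather than as a single total, supports the comparison between risks suggested under the incident occurrence rate.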

Enabling Secure AI adoption

With AI capabilities expanding across organisational systems and workflows, the risks associated with their use extend beyond technical considerations to include regulatory, ethical, strategic, and operational impacts. Without clear decision-making structures and accountabilities defined, organisations face inconsistent practices, misaligned use cases, and avoidable security exposures.

CyberCX can help you define and implement an AI governance framework that is aligned to your organisational strategy, risk appetite and regulatory expectations. Through defined roles, clear policies, and consistent processes, we can support you in maintaining effective oversight and managing risks as AI use grows.